All in One View

Content from Introduction to R and RStudio

Last updated on 2026-04-23 | Edit this page

Overview

Questions

- Why should you use

RandRStudio? - How do you get started working in

RandRStudio?

Objectives

- Understand the difference between

RandRStudio - Describe the purpose of the different

RStudiopanes - Organize files and directories into

RProjects

Acknowledgement

This workshop was adapted using material from the Data Carpentry

lessons R for Ecologists,

specifically introduction-r-rstudio.

Other Materials

What are R and RStudio?

R refers to a programming language as well as the

software that runs R code.

RStudio

is a software interface that can make it easier to write R scripts and

interact with the R software. It’s a very popular platform,

and RStudio also maintains the tidyverse series of

packages we will use in these workshops.

Why learn R?

You’re working on a project when your advisor suggests that you begin working with one of their long-time collaborators. According to your advisor, this collaborator is very talented, but only speaks a language that you don’t know. Your advisor assures you that this is ok, the collaborator won’t judge you for starting to learn the language, and will happily answer your questions. However, the collaborator is also quite pedantic. While they don’t mind that you don’t speak their language fluently yet, they are always going to answer you quite literally.

You decide to reach out to the collaborator. You find that they email you back very quickly, almost immediately most of the time. Since you’re just learning their language, you often make mistakes. Sometimes, they tell you that you’ve made a grammatical error or warn you that what you asked for doesn’t make a lot of sense. Sometimes these warnings are difficult to understand, because you don’t really have a grasp of the underlying grammar. Sometimes you get an answer back, with no warnings, but you realize that it doesn’t make sense, because what you asked for isn’t quite what you wanted. Since this collaborator responds almost immediately, without tiring, you can quickly reformulate your question and send it again.

In this way, you begin to learn the language your collaborator speaks, as well as the particular way they think about your work. Eventually, the two of you develop a good working relationship, where you understand how to ask them questions effectively, and how to work through any issues in communication that might arise.

This collaborator’s name is R.

When you send commands to R, you get a response back.

Sometimes, when you make mistakes, you will get back a nice, informative

error message or warning. However, sometimes the warnings seem to

reference a much “deeper” level of R than you’re familiar

with. Or, even worse, you may get the wrong answer with no warning

because the command you sent is perfectly valid, but isn’t what you

actually want. While you may first have some success working with

R by memorizing certain commands or reusing other scripts,

this is akin to using a collection of tourist phrases or pre-written

statements when having a conversation. You might make a mistake (like

getting directions to the library when you need a bathroom), and you are

going to be limited in your flexibility (like furiously paging through a

tourist guide looking for the term for “thrift store”).

This is all to say that we are going to spend a bit of time digging

into some of the more fundamental aspects of the R

language, and these concepts may not feel as immediately useful as, say,

learning to make plots with ggplot2. However, learning

these more fundamental concepts will help you develop an understanding

of how R thinks about data and code, how to interpret error

messages, and how to flexibly expand your skills to new situations.

R does not involve lots of pointing and clicking, and

that’s a good thing

Since R is a programming language, the results of your

analysis do not rely on remembering a succession of pointing and

clicking, but instead on a series of written commands, and that’s a good

thing! So, if you want to redo your analysis because you collected more

data, you don’t have to remember which button you clicked in which order

to obtain your results; you just have to run your script again.

Working with scripts makes the steps you used in your analysis clear, and the code you write can be inspected by someone else who can give you feedback and spot mistakes.

Working with scripts forces you to have a deeper understanding of what you are doing, and facilitates your learning and comprehension of the methods you use.

R code is great for reproducibility

Reproducibility is when someone else (including your future self) can obtain the same results from the same dataset when using the same analysis.

R integrates with other tools to generate manuscripts

from your code. If you collect more data, or fix a mistake in your

dataset, the figures and the statistical tests in your manuscript are

updated automatically.

An increasing number of journals and funding agencies expect analyses

to be reproducible, so knowing R will give you an edge with

these requirements.

R is interdisciplinary and extensible

With tens of thousands of packages that can be installed to extend

its capabilities, R provides a framework that allows you to

combine statistical approaches from many scientific disciplines to best

suit the analytical framework you need to analyze your data. For

instance, R has packages for image analysis, GIS, time

series, population genetics, and a lot more.

R works on data of all shapes and sizes

The skills you learn with R scale easily with the size

of your dataset. Whether your dataset has hundreds or millions of lines,

it won’t make much difference to you.

R is designed for data analysis. It comes with special

data structures and data types that make handling of missing data and

statistical factors convenient.

R can read data from many different file types,

including geospatial data, and connect to local and remote

databases.

R produces high-quality graphics

R has well-developed plotting capabilities, and the

ggplot2 package is one of, if not the most powerful pieces

of plotting software available today. We can learn to use

ggplot2 in future workshops if you wish.

R has a large and welcoming community

Thousands of people use R daily. Many of them are willing to help you

through mailing lists and websites such as Stack Overflow, or on

the RStudio community.

Since R is very popular among researchers, most of the

help communities and learning materials are aimed towards other

researchers. Python is a similar language to

R, and can accomplish many of the same tasks, but is widely

used by software developers and software engineers, so

Python resources and communities are not as oriented

towards researchers.

Navigating RStudio

We will use the RStudio integrated development

environment (IDE) to write code into scripts, run code in

R, navigate files on our computer, inspect objects we create in

R, and look at the plots we make. RStudio has many other

features that can help with things like version control, developing

R packages, and writing Shiny apps, but we

won’t cover those in this workshop.

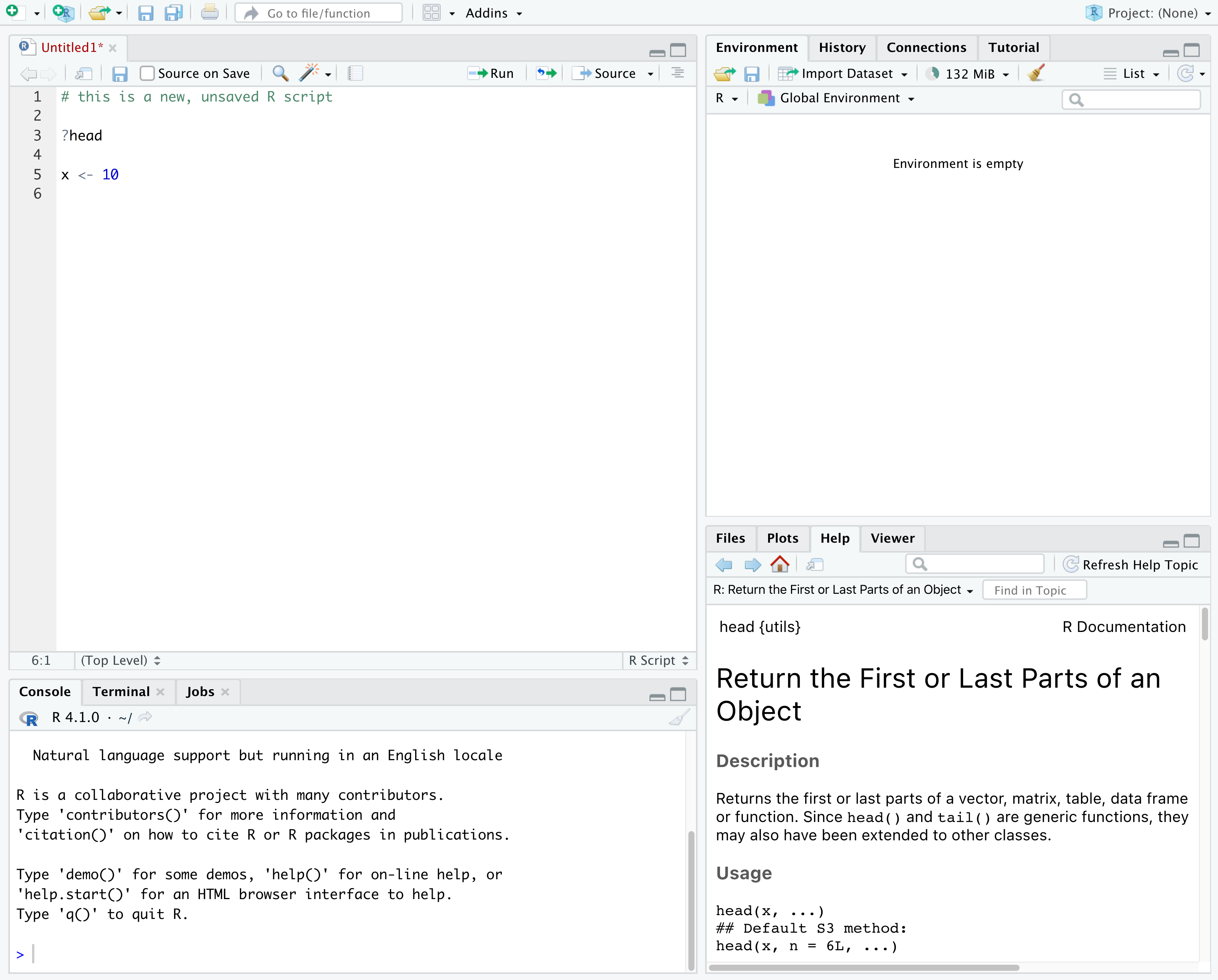

In the above screenshot, we can see 4 “panes” in the default layout:

- Top-Left: the

Sourcepane that displays scripts and other files.- If you only have 3 panes, and the Console pane is in the top left,

press

Shift+Cmd+N(Mac) orShift+Ctrl+N(WindowsorLinux) to open a blankRscript, which should make theSourcepane appear.

- If you only have 3 panes, and the Console pane is in the top left,

press

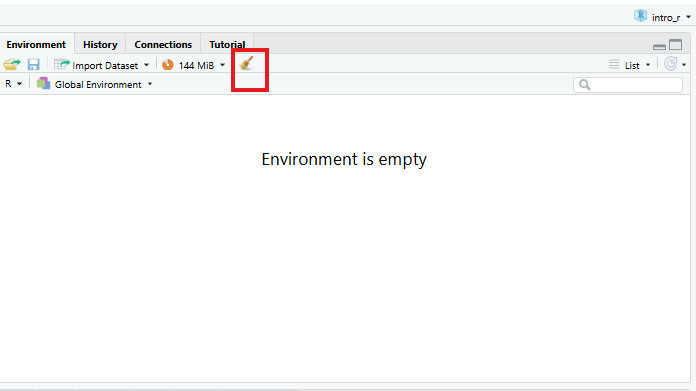

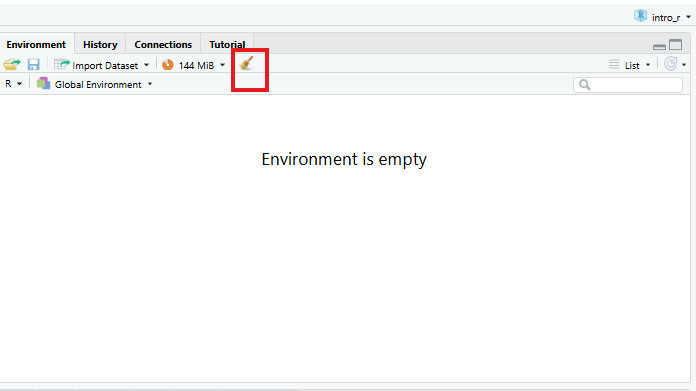

- Top-Right: the

Environment/Historypane, which shows all the objects in your current R session (Environment) and your command history (History)- there are some other tabs here, including

Connections,Build,Tutorial, and possiblyGit - we won’t cover any of the other tabs, but

RStudiohas lots of other useful features

- there are some other tabs here, including

- Bottom-Left: the

Consolepane, where you can interact directly with anRconsole, which interpretsRcommands and prints the results- There are also tabs for

TerminalandJobs

- There are also tabs for

- Bottom-Right: the

Files/Plots/Help/Viewerpane to navigate files or view plots and help pages

You can customize the layout of these panes, as well as many settings

such as RStudio color scheme, font, and even keyboard

shortcuts. You can access these settings by going to the menu bar, then

clicking on Tools → Global Options.

RStudio puts most of the things you need to work in

R into a single window, and also includes features like

keyboard shortcuts, autocompletion of code, and syntax highlighting

(different types of code are colored differently, making it easier to

navigate your code).

Getting set up in RStudio

It is a good practice to organize your projects into self-contained folders right from the start, so we will start building that habit now. A well-organized project is easier to navigate, more reproducible, and easier to share with others. Your project should start with a top-level folder that contains everything necessary for the project, including data, scripts, and images, all organized into sub-folders.

RStudio provides a Projects feature that

can make it easier to work on individual projects in R. We

will create a project that we will keep everything for this

workshop.

- Start

RStudio(you should see a view similar to the screenshot above). - In the top right, you will see a blue 3D cube and the words

Project: (None). Click on this icon. - Click

New Projectfrom the dropdown menu. - Click

New Directory, thenNew Project - Type out a name for the project, such as

intro_r - Put it in a convenient location using the

Create project as a subdirectory of:section. We recommend yourDesktop. You can always move the project somewhere else later, because it will be self-contained. - Click

Create Projectand your new project will open.

Next time you open RStudio, you can click that 3D cube

icon, and you will see options to open existing projects, like the one

you just made.

One of the benefits to using RStudio Projects is that they

automatically set the working directory to the

top-level folder for the project. The working directory is the folder

where R is working, so it views the location of all files (including

data and scripts) as being relative to the working directory. You may

come across scripts that include something like

setwd("/Users/YourUserName/MyCoolProject"), which directly

sets a working directory. This is usually much less portable, since that

specific directory might not be found on someone else’s computer (they

probably don’t have the same username as you). Using

RStudio Projects means we don’t have to deal with manually

setting the working directory.

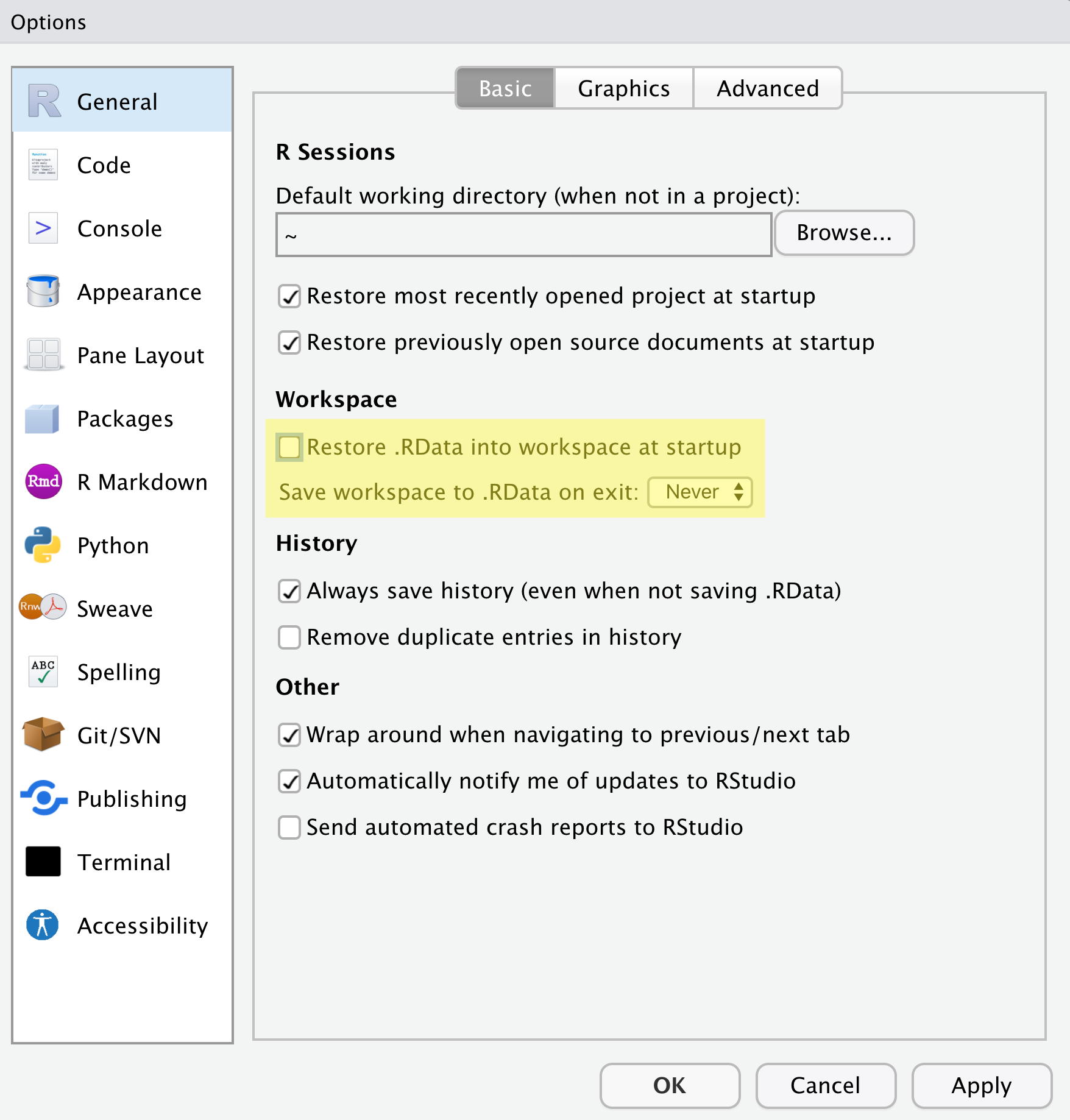

There are a few settings we will need to adjust to improve the

reproducibility of our work. Go to your menu bar, then click

Tools → Global Options to open up the Options

window.

Make sure your settings match those highlighted in yellow. We don’t

want RStudio to store the current status of our R session

and reload it the next time we startR. This might sound

convenient, but for the sake of reproducibility, we want to start with a

clean, empty R session every time we work. That means that we have to

record everything we do into scripts, save any data we need into files,

and store outputs like images as files. We want to get used to

everything we generate in a single R session being

disposable. We want our scripts to be able to regenerate things

we need, other than “raw materials” like data.

Organizing your project directory

Using a consistent folder structure across all your new projects will help keep a growing project organized, and make it easy to find files in the future. This is especially beneficial if you are working on multiple projects, since you will know where to look for particular kinds of files.

We will use a basic structure for this workshop, which is often a good place to start, and can be extended to meet your specific needs. Here is a diagram describing the structure that we will end up with as we progress through the workshops:

intro_r

│

└── scripts

│

└── data

│ └── cleaned

│ └── raw

│

└─── images

│

└─── documentsWithin our project folder (intro_r), we first have a

scripts folder to hold any scripts we write. We will also

have a data folder containing cleaned and

raw subfolders. In general, you want to keep your

raw data completely untouched, so once you put data into

that folder, you do not modify it. Instead, you read it into R, and if

you make any modifications, you write that modified file into the

cleaned folder. We also have an images folder

for plots we make, and a documents folder for any other

documents you might produce.

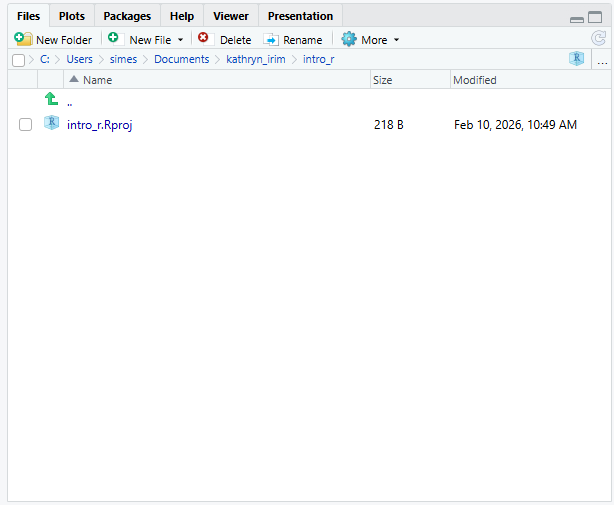

Let’s start making our new script folder. Go to the

Files pane (bottom right), and check the

current directory. You should be in the directory for the project you

just made, in our case intro_r. You shouldn’t see any

folders in here yet.

Next, click the New Folder button, and

type in scripts to generate your scripts

folder. It should appear in the Files list now. It’s worth noting that

the Files pane helps you create, find, and

open files, but moving through your files won’t change where the

working directory of your project is.

Working in R and RStudio

The basis of programming is that we write down instructions for the computer to follow, and then we tell the computer to follow those instructions. We write these instructions in the form of code, which is a common language that is understood by the computer and humans (after some practice). We call these instructions commands, and we tell the computer to follow the instructions by running (also called executing) the commands.

Console vs. script

You can run commands directly in the R console, or you

can write them into an R script. It may help to think of

working in the console vs. working in a script as something like

cooking. The console is like making up a new recipe, but not writing

anything down. You can carry out a series of steps and produce a nice,

tasty dish at the end. However, because you didn’t write anything down,

it’s harder to figure out exactly what you did, and in what order.

Writing a script is like taking nice notes while cooking- you can tweak and edit the recipe all you want, you can come back in 6 months and try it again, and you don’t have to try to remember what went well and what didn’t. It’s actually even easier than cooking, since you can hit one button and the computer “cooks” the whole recipe for you!

An additional benefit of scripts is that you can leave

comments for yourself or others to read. Lines that

start with # are considered comments and will not be

interpreted as R code.

Console

- The

Rconsole is where code is run/executed - The prompt, which is the

>symbol, is where you can type commands - By pressing

Enter,Rwill execute those commands and print the result. - You can work here, and your history is saved in the

Historypane, but you can’t access it in the future

Script

- You can make a new

Rscript by clickingFile → New File → R Script, clicking the green+button in the top left corner ofRStudio, or pressingShift+Cmd+N(Mac) orShift+Ctrl+N(WindowsandLinux). It will be unsaved, and calledUntitled1 - If you type out lines of

Rcode in a script, you can send them to theRconsole to be evaluated-

Cmd+Enter(Mac) orCtrl+Enter(WindowsandLinux) will run the line of code that your cursor is on - If you highlight multiple lines of code, you can run all of them by

pressing

Cmd+Enter(Mac) orCtrl+Enter(WindowsandLinux) - By preserving commands in a script, you can edit and rerun them quickly, save them for later, and share them with others

- You can leave comments for yourself by starting a line with a

#

-

Example

Let’s try running some code in the console and in a script. First,

click down in the Console pane, and type out 1+1. Hit

Enter to run the code. You should see your

code echoed, and then the value of 2 returned.

Now click into your blank script, and type out 1+1. With

your cursor on that line, hit Cmd+Enter

(Mac) or Ctrl+Enter

(Windows and Linux) to run the code. You will

see that your code was sent from the script to the console, where it

returned a value of 2, just like when you ran your code

directly in the console.

-

Ris a programming language and software used to run commands in that language -

RStudiois software to make it easier to write and run code inR - Use

R Projectsto keep your work organized and self-contained - Write your code in scripts for reproducibility and portability

Content from Introduction to R Packages, Markdown and Notebooks

Last updated on 2026-04-23 | Edit this page

Overview

Questions

- What is an

R package? - How to install

Rpackages? - What is

R MarkdownandR Notebooks? - How can I integrate my

Rcode with text and plots? - How can I convert

.Rmdfiles to.html?

Objectives

- Understand what an

R packageis - Install packages using the

packagestab. - Install packages using

Rcode. - Understand basic syntax of

R MarkdownandR Notebooks

Acknowledgement

This workshop was adapted using material from the Data Carpentry

lessons R for Social Scientists,

specifically lesson 00-intro

and lesson 06-rmarkdown.

Other Materials

What are R packages?

R Packages are the

fundamental units of reproducible R code. They are

collections of reusable R functions, sample data, and the

documentation that describes how to use the functions.

What is the difference between base R and

packages?

The base R package

contains the basic functions which let R function as a

language:

- Arithmetic

- Input/output

- Basic programming support, etc

The R software is distributed with the

base R package installed. In addition to the

base R installation, there are in excess of 20,000

additional packages which can be used to extend the functionality of

R. Many of these have been written by R users

and have been made available in central repositories, like the one

hosted at the Comprehensive R Archive Network CRAN,

for anyone to download and install into their own R

environment.

CRAN

is a network of ftp and web servers around the world that store

identical, up-to-date, versions of code and documentation for

R.

Installing packages using R code and the

packages tab

We’ll use the tidyverse and here packages

in this workshop.

You can install these packages from the console by typing the command

install.packages(), or from the packages

tab.

We’ll install tidyverse from the console, and

here from the packages tab.

R

install.packages("tidyverse")

OUTPUT

The following package(s) will be installed:

- tidyverse [2.0.0]

These packages will be installed into "/__w/irim-r-workshops/irim-r-workshops/renv/profiles/lesson-requirements/renv/library/linux-ubuntu-noble/R-4.5/x86_64-pc-linux-gnu".

# Installing packages --------------------------------------------------------

[32m✔[0m tidyverse 2.0.0 [linked from cache]

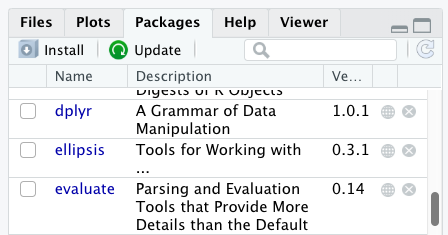

Successfully installed 1 package in 3.6 milliseconds.You can see if you have a package installed by looking in the

packages tab (on the lower-right by default). You can also

type the command installed.packages() into the console and

examine the output.

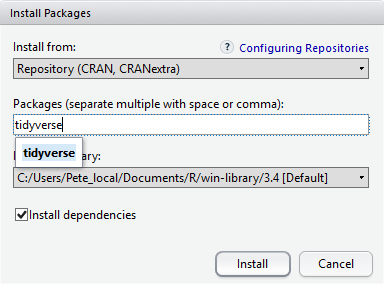

Packages can also be installed from the packages tab. On

the packages tab, click the Install icon and

start typing the name of the package you want in the text box. As you

type, packages matching your starting characters will be displayed in a

drop-down list so that you can select them.

At the bottom of the Install Packages window is a check

box to Install dependencies. This is ticked by default,

which is usually what you want. Packages can (and do) make use of

functionality built into other packages, so for the functionality

contained in the package you are installing to work properly, there may

be other packages which have to be installed with them. The

Install dependencies option makes sure that this

happens.

Exercise

Use the Packages tab to confirm that you have both the

tidyverse and here packages installed.

Scroll through packages tab down to tidyverse. You can

also type a few characters into the searchbox. The

tidyverse package is really a package of packages,

including ggplot2 and dplyr, both of which

require other packages to run correctly. All of these packages will be

installed automatically. Depending on what packages have previously been

installed in your R environment, the install of tidyverse

could be very quick or could take several minutes. As the install

proceeds, messages relating to its progress will be written to the

console. You will be able to see all of the packages which are actually

being installed.

Because the install process accesses the CRAN

repository, you will need an Internet connection to install

packages.

It is also possible to install packages from other repositories, as

well as Github or the local file system, but we won’t be

looking at these options in this workshop.

R Markdown and R Notebooks

R Markdown is a flexible type of document that allows

you to seamlessly combine executable R code, and its

output, with text in a single document.

An R Notebook is a specific interactive execution mode

for an R Markdown (Rmd) document. Code chunks

are executed independently and interactively within the

RStudio editor.

R Markdown documents can be readily converted to

multiple static and dynamic output formats, including PDF (.pdf), Word

(.docx), and HTML (.html).

The benefit of a well-prepared R Markdown or

Notebook document is full reproducibility. This also means

that, if you notice a data transcription error, or you are able to add

more data to your analysis, you will be able to recompile the report

without making any changes in the actual document.

Creating an R Notebook file

To create a new R Markdown document in

RStudio, click

File -> New File -> R Notebook. You may be prompted

to install required packages the first time you do this.

Basic components of an R Notebook

YAML Header

To control the output, a YAML (YAML Ain’t

Markup Language) header is needed:

---

title: "My Awesome Report"

output: html_document

---The header is defined by the three hyphens at the beginning

(---) and the three hyphens at the end

(---).

In the YAML, the only required field is the

output:, which specifies the type of output you want. This

can be an html_document, a pdf_document, or a

word_document. We will start with an HTML document and

discuss the other options later.

After the header, to begin the body of the document, you start typing

after the end of the YAML header (i.e. after the second

---).

Markdown syntax

Markdown is a popular markup language that allows you to

add formatting elements to text, such as bold,

italics, and code. The formatting will not be

immediately visible in a markdown (.md) document, like you would see in

a Word document. Rather, you add Markdown syntax to the

text, which can then be converted to various other files that can

translate the Markdown syntax. Markdown is

useful because it is lightweight, flexible, and platform

independent.

RStudio provides a real time preview of the formatting-

click the Visual tab to view the rendered

Markdown, or Source to view the raw

Markdown.

Headings

A # in front of text indicates to Markdown

that this text is a heading. Adding more #s make the

heading smaller, i.e. one # is a first level heading, two

##s is a second level heading, etc. up to the 6th level

heading.

# Title

## Section

### Sub-section

#### Sub-sub section

##### Sub-sub-sub section

###### Sub-sub-sub-sub section(only use a level if the one above is also in use)

Formatting

You can make things bold by surrounding the word

with double asterisks, **bold**, or double underscores,

__bold__; and italicize using single asterisks,

*italics*, or single underscores,

_italics_.

You can also combine bold and italics to

write something really important with

triple-asterisks, ***really***, or underscores,

___really___; and, if you’re feeling bold (pun intended),

you can also use a combination of asterisks and underscores,

**_really_**, **_really_**.

To create code-type font, surround the word with

backticks, `code-type`.

Code Chunks

Code chunks are blocks where you write and execute R code. They start

with ```{r} and end with ```.

To insert a Chunk, click the small arrow next to the

Insert button in the editor toolbar and select

R.

To run a Chunk, click the small green play arrow on the right side of

the chunk, or use the keyboard shortcut

Ctrl+Alt+I on Windows and

Linux (or Cmd+Option+I on

Mac).

Render and Share Your Notebook

Once your analysis is complete, you can generate a final, polished report.

Click the Preview (or Render) button in the

RStudio editor toolbar.

This creates a self-contained HTML file (or PDF/Word document,

depending on your settings in your YAML header) that

includes both the narrative text and the final results.

You can easily share this output file with others, even if they don’t

use R.

Now that we’ve learned a couple of things, it might be useful to implement them.

Create your own new R Notebook

Start by opening a new R Notebook:

Click File -> New File -> R Notebook

When you open a new R Notebook, some explanatory text is

provided. This can be deleted so you can enter your own text and

code.

Download data

We will be using a dataset called SAFI_clean.csv. The

direct download link for this file is: https://github.com/datacarpentry/r-socialsci/blob/main/episodes/data/SAFI_clean.csv.

This data is a slightly cleaned up version of the

SAFI Survey Results available on figshare.

First, we need to create a new folder called data to

store this dataset. Go to the Files pane, and create a new folder named

data, and two subfolders called cleaned and

raw.

intro_r

│

└── scripts

│

└── data

│ └── cleaned

│ └── raw

│

└─── images

│

└─── documentsYou can either download the SAFI_clean.csv dataset used

for this workshop from the GitHub link or with R. You can

download the file from this GitHub link

and save it as SAFI_clean.csv in the data/raw

directory you just created. Or you can do this directly from

R by copying and pasting this in your console:

download.file( "https://raw.githubusercontent.com/datacarpentry/r-socialsci/main/episodes/data/SAFI_clean.csv", "data/raw/SAFI_clean.csv", mode = "wb" )

Start an Introduction section

Make a header called Introduction, and insert some

explanatory text about the dataset that will be in your report. For

example:

This report uses the tidyverse package

along with the SAFI dataset, which has columns

that include:

- village

- interview_date

- no_members

- years_liv

- respondent_wall_type

- roomsYou can also create an ordered list using numbers:

1. village

2. interview_date

3. no_members

4. years_liv

5. respondent_wall_type

6. roomsAnd nested items by tab-indenting:

- village

- Name of village

- interview_date

- Date of interview

- no_members

- How many family members lived in a house

- years_liv

- How many years respondent has lived in village or neighbouring

village

- respondent_wall_type

- Type of wall of house

- rooms

- Number of rooms in houseFor more Markdown syntax see the following reference guide.

Now we can render the document into HTML by clicking the

preview button in the top of the Source

pane (top left). If you haven’t saved the document yet, you will be

prompted to do so when you preview for the

first time.

Writing an R Markdown report

Now we will add some R code to demonstrate (we will

learn more about this code in the next workshop!).

First, we need to make sure tidyverse

is loaded. It is not enough to load

tidyverse from the console, we will need

to load it within our R Notebook. The same applies to our

data. To load these, we will need to create a ‘code chunk’ at the top of

our document (below the YAML header).

A code chunk can be inserted by clicking

Code \> Insert Chunk, or by using the keyboard shortcuts

Ctrl+Alt+I on Windows and

Linux, and Cmd+Option+I on

Mac.

The syntax of a code chunk is:

An R Markdown document knows that this text is not part

of the report from the (```) that begins and ends the

chunk. It also knows that the code inside of the chunk is R code from

the r inside of the curly braces ({}). After

the r you can add a name for the code chunk . Naming a

chunk is optional, but recommended. Each chunk name must be unique, and

only contain alphanumeric characters and -.

To load tidyverse and our

SAFI_clean.csv file, we will insert a chunk and call it

‘setup’. Since we don’t want this code or the output to show in our

rendered HTML document, we add an include = FALSE option

after the code chunk name ({r setup, include = FALSE}).

MARKDOWN

```{r setup, include = FALSE}

library(tidyverse)

library(here)

interviews <- read_csv(here("data/raw/SAFI_clean.csv"), na = "NULL")

```Important Note!

The file paths you give in a .Rmd document, e.g. to load a .csv file, are relative to the .Rmd document, not the project root.

We highly recommend the use of the here() function to

keep the file paths consistent within your project.

Insert table

Next, we will create a table which shows the average household size

grouped by village and memb_assoc. We can do

this by creating a new code chunk and calling it ‘interview-tbl’. Or,

you can come up with something more creative (just remember to stick to

the naming rules).

We will learn more about this code later!

To see the output, run the code chunk with the green triangle in the

top right corner of the the chunk, or with the keyboard shortcuts:

Ctrl+Alt+C on Windows and

Linux, or Cmd+Option+C on

Mac.

To make sure the table is formatted nicely in our output document, we

will need to use the kable() function from the

knitr package. The kable()

function takes the output of your R code and knits it into a nice

looking HTML table. You can also specify different aspects of the table,

e.g. the column names, a caption, etc.

Run the code chunk to make sure you get the desired output.

R

interviews %>%

filter(!is.na(memb_assoc)) %>%

group_by(village, memb_assoc) %>%

summarize(mean_no_membrs = mean(no_membrs)) %>%

knitr::kable(caption = "We can also add a caption.",

col.names = c("Village", "Member Association",

"Mean Number of Members"))

| Village | Member Association | Mean Number of Members |

|---|---|---|

| Chirodzo | no | 8.062500 |

| Chirodzo | yes | 7.818182 |

| God | no | 7.133333 |

| God | yes | 8.000000 |

| Ruaca | no | 7.178571 |

| Ruaca | yes | 9.500000 |

Many different R packages can be used to generate

tables. Some of the more commonly used options are listed in the table

below.

| Name | Creator(s) | Description |

|---|---|---|

| condformat | Oller Moreno (2022) | Apply and visualize conditional formatting to data frames in R. It renders a data frame with cells formatted according to criteria defined by rules, using a tidy evaluation syntax. |

| DT | Xie et al. (2023) | Data objects in R can be rendered as HTML tables using the JavaScript library ‘DataTables’ (typically via R Markdown or Shiny). The ‘DataTables’ library has been included in this R package. |

| formattable | Ren and Russell (2021) | Provides functions to create formattable vectors and data frames. ‘Formattable’ vectors are printed with text formatting, and formattable data frames are printed with multiple types of formatting in HTML to improve the readability of data presented in tabular form rendered on web pages. |

| flextable | Gohel and Skintzos (2023) | Use a grammar for creating and customizing pretty tables. The following formats are supported: ‘HTML’, ‘PDF’, ‘RTF’, ‘Microsoft Word’, ‘Microsoft PowerPoint’ and R ‘Grid Graphics’. ‘R Markdown’, ‘Quarto’, and the package ‘officer’ can be used to produce the result files. |

| gt | Iannone et al. (2022) | Build display tables from tabular data with an easy-to-use set of functions. With its progressive approach, we can construct display tables with cohesive table parts. Table values can be formatted using any of the included formatting functions. |

| huxtable | Hugh-Jones (2022) | Creates styled tables for data presentation. Export to HTML, LaTeX, RTF, ‘Word’, ‘Excel’, and ‘PowerPoint’. Simple, modern interface to manipulate borders, size, position, captions, colours, text styles and number formatting. |

| pander | Daróczi and Tsegelskyi (2022) | Contains some functions catching all messages, ‘stdout’ and other useful information while evaluating R code and other helpers to return user specified text elements (e.g., header, paragraph, table, image, lists etc.) in ‘pandoc’ markdown or several types of R objects similarly automatically transformed to markdown format. |

| pixiedust | Nutter and Kretch (2021) | ‘pixiedust’ provides tidy data frames with a programming interface intended to be similar to ’ggplot2’s system of layers with fine-tuned control over each cell of the table. |

| reactable | Lin et al. (2023) | Interactive data tables for R, based on the ‘React Table’ JavaScript library. Provides an HTML widget that can be used in ‘R Markdown’ or ‘Quarto’ documents, ‘Shiny’ applications, or viewed from an R console. |

| rhandsontable | Owen et al. (2021) | An R interface to the ‘Handsontable’ JavaScript library, which is a minimalist Excel-like data grid editor. |

| stargazer | Hlavac (2022) | Produces LaTeX code, HTML/CSS code and ASCII text for well-formatted tables that hold regression analysis results from several models side-by-side, as well as summary statistics. |

| tables | Murdoch (2022) | Computes and displays complex tables of summary statistics. Output may be in LaTeX, HTML, plain text, or an R matrix for further processing. |

| tangram | Garbett et al. (2023) | Provides an extensible formula system to quickly and easily create production quality tables. The processing steps are a formula parser, statistical content generation from data defined by a formula, and rendering into a table. |

| xtable | Dahl et al. (2019) | Coerce data to LaTeX and HTML tables. |

| ztable | Moon (2021) | Makes zebra-striped tables (tables with alternating row colors) in LaTeX and HTML formats easily from a data.frame, matrix, lm, aov, anova, glm, coxph, nls, fitdistr, mytable and cbind.mytable objects. |

Customizing chunk output

We mentioned using include = FALSE in a code chunk to

prevent the code and output from printing in the knitted document. There

are additional options available to customize how the code-chunks are

presented in the output document. The options are entered in the code

chunk after chunk-name and separated by commas, e.g.

{r chunk-name, eval = FALSE, echo = TRUE}.

| Option | Options | Output |

|---|---|---|

eval |

TRUE or FALSE

|

Whether or not the code within the code chunk should be run. |

echo |

TRUE or FALSE

|

Choose if you want to show your code chunk in the output document.

echo = TRUE will show the code chunk. |

include |

TRUE or FALSE

|

Choose if the output of a code chunk should be included in the

document. FALSE means that your code will run, but will not

show up in the document. |

warning |

TRUE or FALSE

|

Whether or not you want your output document to display potential warning messages produced by your code. |

message |

TRUE or FALSE

|

Whether or not you want your output document to display potential messages produced by your code. |

fig.align |

default, left, right,

center

|

Where the figure from your R code chunk should be output on the page |

Exercise

Play around with the different options in the chunk with the code for the table, and see what each option does to the output.

What happens if you use eval = FALSE and

echo = FALSE? What is the difference between this and

include = FALSE?

Create a chunk with {r eval = FALSE, echo = FALSE}, then

create another chunk with {r include = FALSE} to compare.

eval = FALSE and echo = FALSE will neither run

the code in the chunk, nor show the code in the knitted document. The

code chunk essentially doesn’t exist in the rendered document as it was

never run. Whereas include = FALSE will run the code and

store the output for later use.

In-line R code

Now we will use some in-line R code to present some

descriptive statistics. To use in-line R code, we use the

same backticks that we used in the Markdown section, with

an r to specify that we are generating R-code. The

difference between in-line code and a code chunk is the number of

backticks. In-line R code uses one backtick

(`r`), whereas code chunks use three backticks

(```r```).

For example, today’s date is `r Sys.Date()`, will be

rendered as: today’s date is 2026-04-23.

The code will display today’s date in the output document (well,

technically the date the document was last knitted or previewed).

The best way to use in-line R code, is to minimize the amount of code you need to produce the in-line output by preparing the output in code chunks. Let’s say we’re interested in presenting the average household size in a village.

R

# create a summary data frame with the mean household size by village

mean_household <- interviews %>%

group_by(village) %>%

summarize(mean_no_membrs = mean(no_membrs))

# and select the village we want to use

mean_chirodzo <- mean_household %>%

filter(village == "Chirodzo")

Now we can make an informative statement on the means of each village, and include the mean values as in-line R-code. For example:

The average household size in the village of Chirodzo is

`r round(mean_chirodzo$mean_no_membrs, 2)`

becomes…

The average household size in the village of Chirodzo is 7.08.

Because we are using in-line R code instead of the

actual values, we have created a dynamic document that will

automatically update if we make changes to the dataset and/or code

chunks.

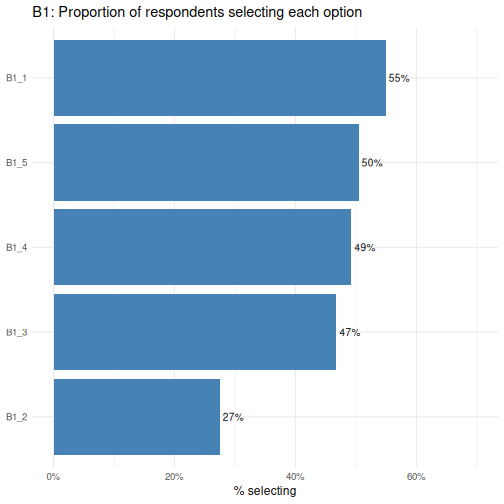

Plots

Finally, we will also include a plot, so our document is a little more colourful and a little less boring. We will create some code to use in the plotting.

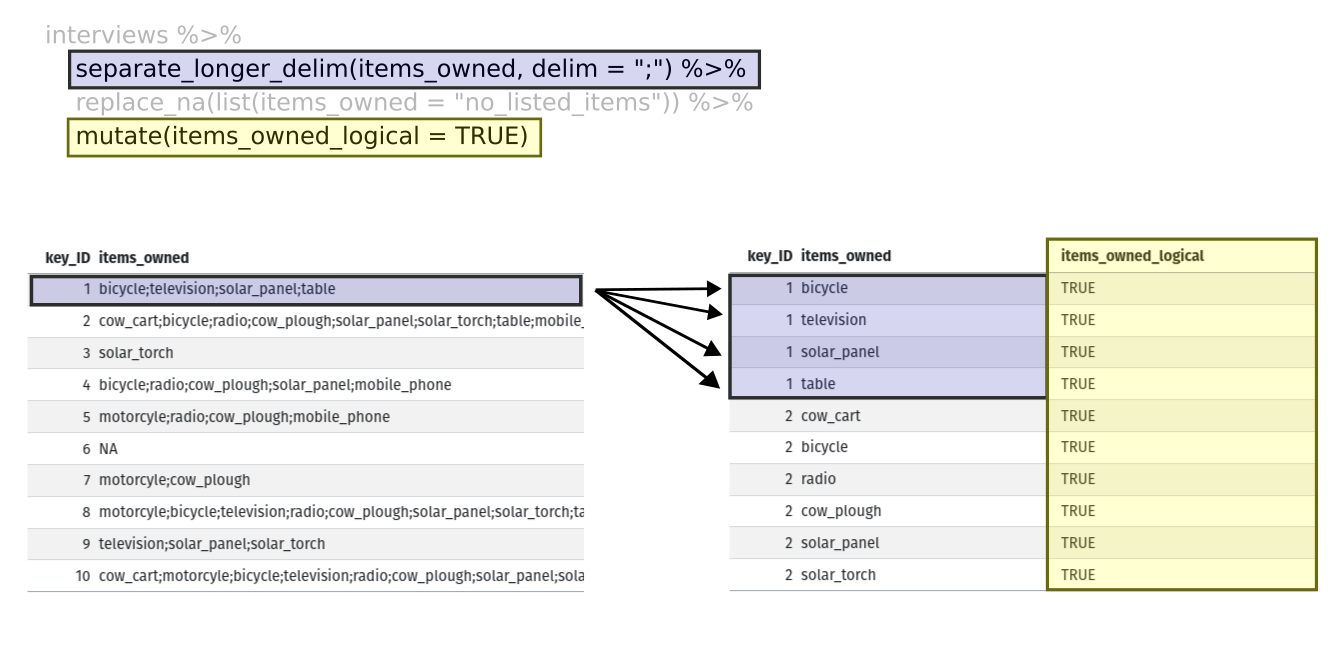

R

interviews_plotting <- interviews %>%

## pivot wider by items_owned

separate_rows(items_owned, sep = ";") %>%

## if there were no items listed, changing NA to no_listed_items

replace_na(list(items_owned = "no_listed_items")) %>%

mutate(items_owned_logical = TRUE) %>%

pivot_wider(names_from = items_owned,

values_from = items_owned_logical,

values_fill = list(items_owned_logical = FALSE)) %>%

## pivot wider by months_lack_food

separate_rows(months_lack_food, sep = ";") %>%

mutate(months_lack_food_logical = TRUE) %>%

pivot_wider(names_from = months_lack_food,

values_from = months_lack_food_logical,

values_fill = list(months_lack_food_logical = FALSE)) %>%

## add some summary columns

mutate(number_months_lack_food = rowSums(select(., Jan:May))) %>%

mutate(number_items = rowSums(select(., bicycle:car)))

R

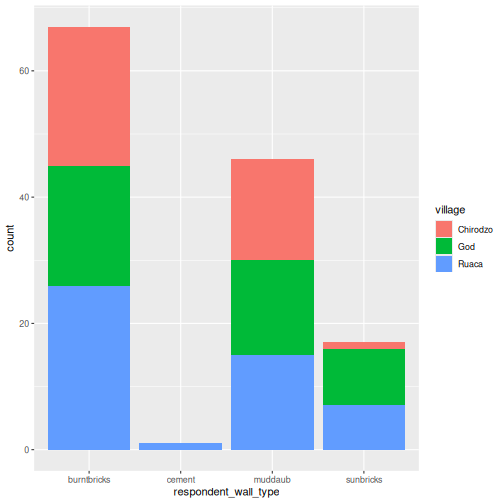

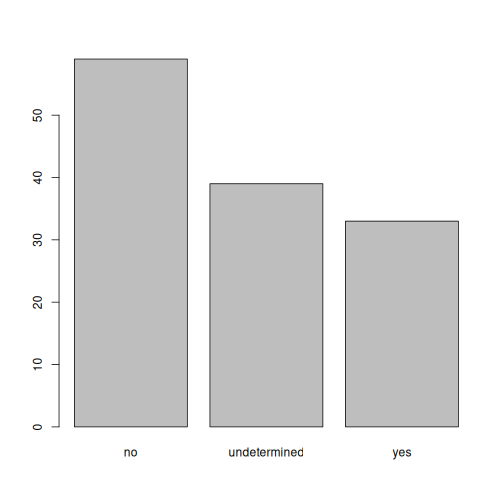

interviews_plotting %>%

ggplot(aes(x = respondent_wall_type)) +

geom_bar(aes(fill = village))

We can also create a caption with the chunk option

fig.cap.

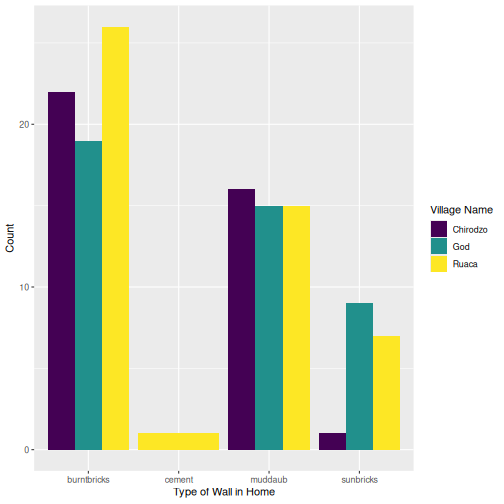

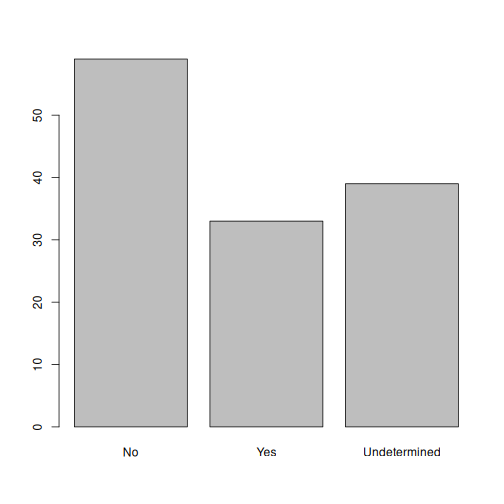

R

interviews_plotting %>%

ggplot(aes(x = respondent_wall_type)) +

geom_bar(aes(fill = village), position = "dodge") +

labs(x = "Type of Wall in Home", y = "Count", fill = "Village Name") +

scale_fill_viridis_d() # add colour deficient friendly palette

Other output options

You can convert R Markdown to a PDF or a Word document

(among others). Click the little triangle next to the

Preview button to get a drop-down menu. Or

you could put pdf_document or word_document in

the initial header of the file.

---

title: "My Awesome Report"

author: "Author name"

date: ""

output: word_document

---Note: Creating PDF documents

Creating .pdf documents may require installation of some

extra software. The R package tinytex provides some tools

to help make this process easier for R users. With tinytex

installed, run tinytex::install_tinytex() to install the

required software (you’ll only need to do this once) and then when you

Knit to pdf tinytex will

automatically detect and install any additional LaTeX packages that are

needed to produce the pdf document. Visit the tinytex website for

more information.

Note: Inserting citations into an

R Markdown file

It is possible to insert citations into an R Markdown

file using the editor toolbar. The editor toolbar includes commonly seen

formatting buttons generally seen in text editors (e.g., bold and italic

buttons). The toolbar is accessible by using the settings dropdown menu

(next to the Preview dropdown menu) to select

Use Visual Editor, also accessible through the shortcut

Crtl+Shift+F4. From here, clicking

Insert allows Citation to be selected

(shortcut: Crtl+Shift+F8). For example,

searching 10.1007/978-3-319-24277-4 in

From DOI and inserting will provide the citation for

ggplot2 [@wickham2016]. This will also save

the citation(s) in ‘references.bib’ in the current working directory.

Visit the R Studio website

for more information. Tip: obtaining citation information from relevant

packages can be done by using citation("package").

Resources

R MarkdowndocumentationR Markdown cheat sheetGetting started with R MarkdownIntroduction to R Markdown-

R Markdown: The Definitive Guide(book byRstudioteam)

- Use

install.packages()to install packages (libraries) - Use

library()to load packages -

R Markdownis a useful language for creating reproducible documents combining text and executableRcode - Specify chunk options to control formatting of the output document

Content from Starting with Data

Last updated on 2026-04-23 | Edit this page

Overview

Questions

- How does

Rstore data? - What is a data.frame?

- How can I read a complete

.csvfile intoR? - How can I get basic summary information about my dataset?

- How can I change the way

Rtreats strings in my dataset? - Why would I want strings to be treated differently?

- How are dates represented in

Rand how can I change the format?

Objectives

- Load external data from a

.csvfile into a data frame. - Explore the structure and content of data.frames

- Understand how

Rassigns values to objects - Understand vector types and missing data

- Describe the difference between a factor and a string.

- Create and convert factors

- Examine and change date formats.

Acknowledgement

This workshop was adapted using material from the Data Carpentry

lessons R for Social Scientists,

specifically lesson 02-starting-with-data,

and R for Ecologists,

specifically how-r-thinks-about-data.

Other Materials

Set up

Start by opening up your RStudio project that you

created in a previous workshop

(called intro_r). Open a new R Notebook:

Click File -> New File -> R Notebook. Save your

R Notebook with a filename that makes sense, such as

starting_with_data.Rmd, in the scripts

folder.

When you open a new R Notebook, some explanatory text is

provided. This can be deleted so you can enter your own text and

code.

What are data frames?

Data frames are the de facto data structure for tabular data

in R, and what we use for data processing, statistics, and

plotting.

A data frame is the representation of data in the format of a table

where the columns are vectors that all have the same length. Data frames

are analogous to the more familiar spreadsheet in programs such as

Excel, with one key difference. Because columns are

vectors, each column must contain a single type of data (e.g.,

characters, integers, factors). For example, here is a figure depicting

a data frame comprising a numeric, a character, and a logical

vector.

Data frames can be created by hand, but most commonly they are

generated by the functions read_csv() or

read_table(); in other words, when importing spreadsheets

from your hard drive (or the web). We will now demonstrate how to import

tabular data using read_csv().

Presentation of the SAFI Data

SAFI (Studying African Farmer-Led Irrigation) is a study

looking at farming and irrigation methods in Tanzania and Mozambique.

The survey data was collected through interviews conducted between

November 2016 and June 2017. For this lesson, we will be using a subset

of the available data. For information about the orginal dataset, see

the dataset description.

We will be using a subset of the dataset that has been provided

(data/raw/SAFI_clean.csv). In this dataset, the missing

data is encoded as NULL, each row holds information for a

single interview respondent, and the columns represent:

| column_name | description |

|---|---|

| key_id | Added to provide a unique Id for each observation. (The InstanceID field does this as well but it is not as convenient to use) |

| village | Village name |

| interview_date | Date of interview |

| no_membrs | How many members in the household? |

| years_liv | How many years have you been living in this village or neighboring village? |

| respondent_wall_type | What type of walls does their house have (from list) |

| rooms | How many rooms in the main house are used for sleeping? |

| memb_assoc | Are you a member of an irrigation association? |

| affect_conflicts | Have you been affected by conflicts with other irrigators in the area? |

| liv_count | Number of livestock owned. |

| items_owned | Which of the following items are owned by the household? (list) |

| no_meals | How many meals do people in your household normally eat in a day? |

| months_lack_food | Indicate which months, In the last 12 months have you faced a situation when you did not have enough food to feed the household? |

| instanceID | Unique identifier for the form data submission |

Download the data

If you did not previously downloaded the SAFI_clean.csv

dataset in the previous workshop,

please follow the instructions below to download it. If you already have

the file in your data/raw/ folder, jump to the

Importing data section.

We will be using a dataset called SAFI_clean.csv. The

direct download link for this file is: https://github.com/datacarpentry/r-socialsci/blob/main/episodes/data/SAFI_clean.csv.

This data is a slightly cleaned up version of the SAFI Survey Results

available on figshare.

First, we need to create a new folder called data to

store this dataset. Go to the Files pane, and create a new folder named

data, and two subfolders called cleaned and

raw.

intro_r

│

└── scripts

│

└── data

│ └── cleaned

│ └── raw

│

└─── images

│

└─── documentsYou can either download the SAFI_clean.csv dataset used

for this workshop from the GitHub link or with

R. You can download the file from this GitHub link

and save it as SAFI_clean.csv in the data/raw

directory you just created. Or you can do this directly from

R by copying and pasting this in your console:

download.file( "https://raw.githubusercontent.com/datacarpentry/r-socialsci/main/episodes/data/SAFI_clean.csv", "data/raw/SAFI_clean.csv", mode = "wb" )

Importing data

You are going to load the data in R’s memory using the

function read_csv() from the

readr package, which is part of the

tidyverse; learn more about the

tidyverse collection of packages here.

readr gets installed as part as the

tidyverse installation. When you load the

tidyverse

(library(tidyverse)), the core packages (the packages used

in most data analyses) get loaded, including

readr.

Before proceeding, however, this is a good opportunity to talk about

conflicts. Certain packages we load can end up introducing function

names that are already in use by pre-loaded R packages. For

instance, when we load the tidyverse package below, we will introduce

two conflicting functions: filter() and lag().

This happens because filter and lag are

already functions used by the stats package (already pre-loaded in

R). What will happen now is that if we, for example, call

the filter() function, R will use the

dplyr::filter() version and not the

stats::filter() one. This happens because, if conflicted,

by default R uses the function from the most recently

loaded package. Conflicted functions may cause you some trouble in the

future, so it is important that we are aware of them so that we can

properly handle them, if we want.

To do so, we just need the following functions from the conflicted package:

-

conflicted::conflict_scout(): Shows us any conflicted functions.

-

conflict_prefer("function", "package_prefered"): Allows us to choose the default function we want from now on.

It is also important to know that we can, at any time, just call the

function directly from the package we want, such as

stats::filter().

Even with the use of an RStudio project, it can be

difficult to learn how to specify paths to file locations. Enter the

here package! The here package creates

paths relative to the top-level directory (your RStudio project). These

relative paths work regardless of where the associated source

file lives inside your project, like analysis projects with data and

reports in different subdirectories. This is an important contrast to

using setwd(), which depends on the way you order your

files on your computer.

Before we can use the read_csv() and here()

functions, we need to load the tidyverse and

here packages.

Add a new code chunk in your notebook, load the

tidyverse and here packages, and read in the

SAFI dataset. We’ll assign the dataset to

an object called interviews.

If you recall, the missing data is encoded as NULL in

the dataset. We’ll tell this to the read_csv() function, so

R will automatically convert all the NULL

entries in the dataset into NA.

R

library(tidyverse)

library(here)

interviews <- read_csv(

here("data", "raw", "SAFI_clean.csv"),

na = "NULL")

In the above code, we notice the here() function takes

folder and file names as inputs (e.g., "data",

"SAFI_clean.csv"), each enclosed in quotations

("") and separated by a comma. The here() will

accept as many names as are necessary to navigate to a particular file

(e.g., here("data", "raw", "SAFI_clean.csv)).

The here() function can accept the folder and file names

in an alternate format, using a slash (“/”) rather than commas to

separate the names. The two methods are equivalent, so that

here("data", "raw", "SAFI_clean.csv") and

here("data/raw/SAFI_clean.csv") produce the same result.

(The slash is used on all operating systems; backslashes are not

used.)

Assigning objects

In R, we can assign inputs to a named

object. We do this using the assignment

arrow <-, Alt+-

(Windows and Linux) or

Option+- (Mac).What we are

doing here is taking the result of the code on the right side of the

arrow (reading in the csv file), and assigning it to an object whose

name is on the left side of the arrow (interviews).

You may notice that the contents of the interviews data frame do not

display below the code cell. This is because assignments

(<-) don’t display anything. If we want to check that

our data has been loaded, we can see the contents of the data frame by

typing its name: interviews into a new code chunk.

R

interviews

## Try also

## view(interviews)

## head(interviews)

OUTPUT

# A tibble: 131 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 1 God 2016-11-17 00:00:00 3 4 muddaub

2 2 God 2016-11-17 00:00:00 7 9 muddaub

3 3 God 2016-11-17 00:00:00 10 15 burntbricks

4 4 God 2016-11-17 00:00:00 7 6 burntbricks

5 5 God 2016-11-17 00:00:00 7 40 burntbricks

6 6 God 2016-11-17 00:00:00 3 3 muddaub

7 7 God 2016-11-17 00:00:00 6 38 muddaub

8 8 Chirodzo 2016-11-16 00:00:00 12 70 burntbricks

9 9 Chirodzo 2016-11-16 00:00:00 8 6 burntbricks

10 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

# ℹ 121 more rows

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>Exploring data frames

When working with the output of a new function, it’s often a good

idea to check the class():

R

class(interviews)

OUTPUT

[1] "spec_tbl_df" "tbl_df" "tbl" "data.frame" Whoa! What is this thing? It has multiple

classesspec_tbl_df, tbl_df, tbl,

and data.frame? Well, it’s called a tibble,

and it is the tidyverse version of a data.frame. It

is a data.frame, but with some added perks. It prints out a

little more nicely, it highlights NA values and negative

values in red, and it will generally communicate with you more (in terms

of warnings and errors, which is a good thing).

As a tibble, the type of data included in each column is

listed in an abbreviated fashion below the column names. For instance,

here key_ID is a column of floating point numbers

(abbreviated <dbl> for the word ‘double’),

respondent_wall_type is a column of characters (

<chr>) and theinterview_date is a column

in the “date and time” format (<dttm>).

tidyverse vs. base R

As we begin to delve more deeply into the tidyverse, we

should briefly pause to mention some of the reasons for focusing on the

tidyverse set of tools. In R, there are often many ways to

get a job done, and there are other approaches that can accomplish tasks

similar to the tidyverse.

The phrase base R is used to refer to

approaches that utilize functions contained in R’s default

packages. We will use some base R functions, such as

str(), head(), and nrow(), and we

will be using more scattered throughout this workshop. However, there

are some key base R approaches we will not be teaching.

These include square bracket subsetting. You may come across code

written by other people that looks like

interviews[1:10, 2], which is a base R

command. If you’re interested in learning more about these approaches,

you can check out other Carpentries lessons like the Software Carpentry Programming with R

lesson.

We choose to teach the tidyverse set of packages because

they share a similar syntax and philosophy, making them consistent and

producing highly readable code. They are also very flexible and

powerful, with a growing number of packages designed according to

similar principles and to work well with the rest of the packages. The

tidyverse packages tend to have very clear documentation

and wide array of learning materials that tend to be written with novice

users in mind. Finally, the tidyverse has only continued to

grow, and has strong support from RStudio, which implies

that these approaches will be relevant into the future.

Note

read_csv() assumes that fields are delimited by commas.

However, in several countries, the comma is used as a decimal separator

and the semicolon (;) is used as a field delimiter. If you want to read

in this type of files in R, you can use the

read_csv2 function. It behaves exactly like

read_csv but uses different parameters for the decimal and

the field separators. If you are working with another format, they can

be both specified by the user. Check out the help for

read_csv() by typing ?read_csv to learn more.

There is also the read_tsv() for tab-separated data files,

and read_delim() allows you to specify more details about

the structure of your file.

Inspecting data frames

When calling a tbl_df object (like

interviews), there is already a lot of information about

our data frame being displayed such as the number of rows, the number of

columns, the names of the columns, and as we just saw the class of data

stored in each column. However, there are functions to extract this

information from data frames. Here is a non-exhaustive list of some of

these functions. Let’s try them out!

Size:

-

dim(interviews)- returns a vector with the number of rows as the first element, and the number of columns as the second element (thedimensions of the object) -

nrow(interviews)- returns the number of rows -

ncol(interviews)- returns the number of columns

Content:

-

head(interviews)- shows the first 6 rows -

tail(interviews)- shows the last 6 rows

Names:

-

names(interviews)- returns the column names (synonym ofcolnames()fordata.frameobjects)

Summary:

-

str(interviews)- structure of the object and information about the class, length and content of each column -

summary(interviews)- summary statistics for each column -

glimpse(interviews)- returns the number of columns and rows of the tibble, the names and class of each column, and previews as many values will fit on the screen. Unlike the other inspecting functions listed above,glimpse()is not abase Rfunction so you need to have thetidyversepackage loaded to be able to execute it.

Note: most of these functions are “generic.” They can be used on other types of objects besides data frames or tibbles.

Using functions

We can view the first few rows with the head() function,

and the last few rows with the tail() function:

R

head(interviews)

OUTPUT

# A tibble: 6 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 1 God 2016-11-17 00:00:00 3 4 muddaub

2 2 God 2016-11-17 00:00:00 7 9 muddaub

3 3 God 2016-11-17 00:00:00 10 15 burntbricks

4 4 God 2016-11-17 00:00:00 7 6 burntbricks

5 5 God 2016-11-17 00:00:00 7 40 burntbricks

6 6 God 2016-11-17 00:00:00 3 3 muddaub

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>R

tail(interviews)

OUTPUT

# A tibble: 6 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 192 Chirodzo 2017-06-03 00:00:00 9 20 burntbricks

2 126 Ruaca 2017-05-18 00:00:00 3 7 burntbricks

3 193 Ruaca 2017-06-04 00:00:00 7 10 cement

4 194 Ruaca 2017-06-04 00:00:00 4 5 muddaub

5 199 Chirodzo 2017-06-04 00:00:00 7 17 burntbricks

6 200 Chirodzo 2017-06-04 00:00:00 8 20 burntbricks

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>We used these functions with just one argument, the object

interviews, and we didn’t give the argument a name. In

R, a function’s arguments come in a particular order, and

if you put them in the correct order, you don’t need to name them. In

this case, the name of the argument is x, so we can name it

if we want, but since we know it’s the first argument, we don’t need

to.

Some arguments are optional. For example, the n argument

in head() specifies the number of rows to print. It

defaults to 6, but we can override that by specifying a different

number:

R

head(interviews, n = 10)

OUTPUT

# A tibble: 10 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 1 God 2016-11-17 00:00:00 3 4 muddaub

2 2 God 2016-11-17 00:00:00 7 9 muddaub

3 3 God 2016-11-17 00:00:00 10 15 burntbricks

4 4 God 2016-11-17 00:00:00 7 6 burntbricks

5 5 God 2016-11-17 00:00:00 7 40 burntbricks

6 6 God 2016-11-17 00:00:00 3 3 muddaub

7 7 God 2016-11-17 00:00:00 6 38 muddaub

8 8 Chirodzo 2016-11-16 00:00:00 12 70 burntbricks

9 9 Chirodzo 2016-11-16 00:00:00 8 6 burntbricks

10 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>If we order them correctly, we don’t have to name either:

R

head(interviews, 10)

OUTPUT

# A tibble: 10 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 1 God 2016-11-17 00:00:00 3 4 muddaub

2 2 God 2016-11-17 00:00:00 7 9 muddaub

3 3 God 2016-11-17 00:00:00 10 15 burntbricks

4 4 God 2016-11-17 00:00:00 7 6 burntbricks

5 5 God 2016-11-17 00:00:00 7 40 burntbricks

6 6 God 2016-11-17 00:00:00 3 3 muddaub

7 7 God 2016-11-17 00:00:00 6 38 muddaub

8 8 Chirodzo 2016-11-16 00:00:00 12 70 burntbricks

9 9 Chirodzo 2016-11-16 00:00:00 8 6 burntbricks

10 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>Additionally, if we name them, we can put them in any order we want:

R

head(n = 10, x = interviews)

OUTPUT

# A tibble: 10 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 1 God 2016-11-17 00:00:00 3 4 muddaub

2 2 God 2016-11-17 00:00:00 7 9 muddaub

3 3 God 2016-11-17 00:00:00 10 15 burntbricks

4 4 God 2016-11-17 00:00:00 7 6 burntbricks

5 5 God 2016-11-17 00:00:00 7 40 burntbricks

6 6 God 2016-11-17 00:00:00 3 3 muddaub

7 7 God 2016-11-17 00:00:00 6 38 muddaub

8 8 Chirodzo 2016-11-16 00:00:00 12 70 burntbricks

9 9 Chirodzo 2016-11-16 00:00:00 8 6 burntbricks

10 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>Generally, it’s good practice to start with the required arguments, like the data.frame whose rows you want to see, and then to name the optional arguments. If you are ever unsure, it never hurts to explicitly name an argument.

Aside: Getting Help

To learn more about a function, you can type a ? in

front of the name of the function, which will bring up the official

documentation for that function:

R

?head

Function documentation is written by the authors of the functions, so they can vary pretty widely in their style and readability. The first section, Description, gives you a concise description of what the function does, but it may not always be enough. The Arguments section defines all the arguments for the function and is usually worth reading thoroughly. Finally, the Examples section at the end will often have some helpful examples that you can run to get a sense of what the function is doing.

Another great source of information is package

vignettes. Many packages have vignettes, which are like

tutorials that introduce the package, specific functions, or general

methods. You can run vignette(package = "package_name") to

see a list of vignettes in that package. Once you have a name, you can

run vignette("vignette_name", "package_name") to view that

vignette. You can also use a web browser to go to

https://cran.r-project.org/web/packages/package_name/vignettes/

where you will find a list of links to each vignette. Some packages will

have their own websites, which often have nicely formatted vignettes and

tutorials.

Finally, learning to search for help is probably the most useful

skill for any R user. The key skill is figuring out what

you should actually search for. It’s often a good idea to start your

search with R or R programming. If you have

the name of a package you want to use, start with

R package_name.

Let’s investigate str a bit more.

R

str(interviews)

OUTPUT

spc_tbl_ [131 × 14] (S3: spec_tbl_df/tbl_df/tbl/data.frame)

$ key_ID : num [1:131] 1 2 3 4 5 6 7 8 9 10 ...

$ village : chr [1:131] "God" "God" "God" "God" ...

$ interview_date : POSIXct[1:131], format: "2016-11-17" "2016-11-17" ...

$ no_membrs : num [1:131] 3 7 10 7 7 3 6 12 8 12 ...

$ years_liv : num [1:131] 4 9 15 6 40 3 38 70 6 23 ...

$ respondent_wall_type: chr [1:131] "muddaub" "muddaub" "burntbricks" "burntbricks" ...

$ rooms : num [1:131] 1 1 1 1 1 1 1 3 1 5 ...

$ memb_assoc : chr [1:131] NA "yes" NA NA ...

$ affect_conflicts : chr [1:131] NA "once" NA NA ...

$ liv_count : num [1:131] 1 3 1 2 4 1 1 2 3 2 ...

$ items_owned : chr [1:131] "bicycle;television;solar_panel;table" "cow_cart;bicycle;radio;cow_plough;solar_panel;solar_torch;table;mobile_phone" "solar_torch" "bicycle;radio;cow_plough;solar_panel;mobile_phone" ...

$ no_meals : num [1:131] 2 2 2 2 2 2 3 2 3 3 ...

$ months_lack_food : chr [1:131] "Jan" "Jan;Sept;Oct;Nov;Dec" "Jan;Feb;Mar;Oct;Nov;Dec" "Sept;Oct;Nov;Dec" ...

$ instanceID : chr [1:131] "uuid:ec241f2c-0609-46ed-b5e8-fe575f6cefef" "uuid:099de9c9-3e5e-427b-8452-26250e840d6e" "uuid:193d7daf-9582-409b-bf09-027dd36f9007" "uuid:148d1105-778a-4755-aa71-281eadd4a973" ...

- attr(*, "spec")=

.. cols(

.. key_ID = col_double(),

.. village = col_character(),

.. interview_date = col_datetime(format = ""),

.. no_membrs = col_double(),

.. years_liv = col_double(),

.. respondent_wall_type = col_character(),

.. rooms = col_double(),

.. memb_assoc = col_character(),

.. affect_conflicts = col_character(),

.. liv_count = col_double(),

.. items_owned = col_character(),

.. no_meals = col_double(),

.. months_lack_food = col_character(),

.. instanceID = col_character()

.. )

- attr(*, "problems")=<externalptr> We get quite a bit of useful information here. First, we are told that we have a data.frame of 131 observations, or rows, and 14 variables, or columns.

Next, we get a bit of information on each variable, including its

type (int or chr) and a quick peek at the

first 10 values. You might ask why there is a $ in front of

each variable. This is because the $ is an operator that

allows us to select individual columns from a data.frame.

The $ operator also allows you to use tab-completion to

quickly select which variable you want from a given data.frame. For

example, to get the village variable, we can type

interviews$ and then hit Tab.

We get a list of the variables that we can move through with up and down

arrow keys. Hit Enter when you reach

village, which should finish this code:

R

interviews$village

OUTPUT

[1] "God" "God" "God" "God" "God" "God"

[7] "God" "Chirodzo" "Chirodzo" "Chirodzo" "God" "God"

[13] "God" "God" "God" "God" "God" "God"

[19] "God" "God" "God" "God" "Ruaca" "Ruaca"

[25] "Ruaca" "Ruaca" "Ruaca" "Ruaca" "Ruaca" "Ruaca"

[31] "Ruaca" "Ruaca" "Ruaca" "Chirodzo" "Chirodzo" "Chirodzo"

[37] "Chirodzo" "God" "God" "God" "God" "God"

[43] "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo"

[49] "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo"

[55] "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo"

[61] "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo"

[67] "Chirodzo" "Chirodzo" "Chirodzo" "Chirodzo" "Ruaca" "Chirodzo"

[73] "Ruaca" "Ruaca" "Ruaca" "God" "Ruaca" "God"

[79] "Ruaca" "God" "God" "God" "God" "God"

[85] "God" "God" "God" "God" "God" "Ruaca"

[91] "Ruaca" "Ruaca" "Ruaca" "Ruaca" "God" "God"

[97] "Ruaca" "Ruaca" "Ruaca" "Ruaca" "Ruaca" "Ruaca"

[103] "God" "God" "Ruaca" "Ruaca" "Ruaca" "Ruaca"

[109] "Ruaca" "Ruaca" "God" "Ruaca" "Ruaca" "Ruaca"

[115] "Ruaca" "Ruaca" "God" "God" "Ruaca" "Ruaca"

[121] "Ruaca" "Ruaca" "Ruaca" "Ruaca" "Ruaca" "Chirodzo"

[127] "Ruaca" "Ruaca" "Ruaca" "Chirodzo" "Chirodzo"Vectors: the building block of data

You might have noticed that our last result looked different from

when we printed out the interviews data.frame itself.

That’s because it is not a data.frame, it is a vector.

A vector is a 1-dimensional series of values, in this case a vector of

characters representing the village name.

Data.frames are made up of vectors; each column in a data.frame is a

vector. Vectors are the basic building blocks of all data in

R. Basically, everything in R is a vector, a

bunch of vectors stitched together in some way, or a function.

Understanding how vectors work is crucial to understanding how

R treats data, so we will spend some time learning about

them.

There are 4 main types of vectors (also known as atomic vectors):

"character"for strings of characters, like ourvillageorrespondent_wall_typecolumns. Each entry in a character vector is wrapped in quotes. In other programming languages, this type of data may be referred to as “strings”."integer"for integers. All the numeric values ininterviewsare integers. You may sometimes see integers represented like2Lor20L. TheLindicates toRthat it is an integer, instead of the next data type,"numeric"."numeric", aka"double", vectors can contain numbers including decimals. Other languages may refer to these as “float” or “floating point” numbers."logical"forTRUEandFALSE, which can also be represented asTandF. In other contexts, these may be referred to as “Boolean” data.

Vectors can only be of a single type. Since each

column in a data.frame is a vector, this means an accidental character

following a number, like 29, can change the type of the

whole vector. Mixing up vector types is one of the most common mistakes

in R, and it can be tricky to figure out. It’s often very

useful to check the types of vectors.

To create a vector from scratch, we can use the c()

function, putting values inside, separated by commas.

R

c(1, 2, 5, 12, 4)

OUTPUT

[1] 1 2 5 12 4As you can see, those values get printed out in the console, just

like with interviews$village. To store this vector so we

can continue to work with it, we need to assign it to an object.

R

num <- c(1, 2, 5, 12, 4)

You can check what kind of object num is with the

class() function.

R

class(num)

OUTPUT

[1] "numeric"We see that num is a numeric vector.

Let’s try making a character vector:

R

char <- c("apple", "pear", "grape")

class(char)

OUTPUT

[1] "character"Remember that each entry, like "apple", needs to be

surrounded by quotes, and entries are separated with commas. If you do

something like "apple, pear, grape", you will have only a

single entry containing that whole string.

Finally, let’s make a logical vector:

R

logi <- c(TRUE, FALSE, TRUE, TRUE)

class(logi)

OUTPUT

[1] "logical"Challenge 1: Coercion

Since vectors can only hold one type of data, something has to be done when we try to combine different types of data into one vector.

- What type will each of these vectors be? Try to guess without

running any code at first, then run the code and use

class()to verify your answers.

R

num_logi <- c(1, 4, 6, TRUE)

num_char <- c(1, 3, "10", 6)

char_logi <- c("a", "b", TRUE)

tricky <- c("a", "b", "1", FALSE)

R

class(num_logi)

OUTPUT

[1] "numeric"R

class(num_char)

OUTPUT

[1] "character"R

class(char_logi)

OUTPUT

[1] "character"R

class(tricky)

OUTPUT

[1] "character"R will automatically convert values in a vector so that

they are all the same type, a process called

coercion.

Challenge 1: Coercion (continued)

- How many values in

combined_logicalare"TRUE"(as a character)?

R

combined_logical <- c(num_logi, char_logi)

R

combined_logical

OUTPUT

[1] "1" "4" "6" "1" "a" "b" "TRUE"R

class(combined_logical)

OUTPUT

[1] "character"Only one value is "TRUE". Coercion happens when each

vector is created, so the TRUE in num_logi

becomes a 1, while the TRUE in

char_logi becomes "TRUE". When these two

vectors are combined, R doesn’t remember that the 1 in

num_logi used to be a TRUE, it will just

coerce the 1 to "1".

Challenge 1: Coercion (continued)

- Now that you’ve seen a few examples of coercion, you might have started to see that there are some rules about how types get converted. There is a hierarchy to coercion. Can you draw a diagram that represents the hierarchy of what types get converted to other types?

logical → integer → numeric → character

Logical vectors can only take on two values: TRUE or

FALSE. Integer vectors can only contain integers, so

TRUE and FALSE can be coerced to

1 and 0. Numeric vectors can contain numbers

with decimals, so integers can be coerced from, say, 6 to

6.0 (though R will still display a numeric 6

as 6.). Finally, any string of characters can be

represented as a character vector, so any of the other types can be

coerced to a character vector.

Coercion is not something you will often do intentionally; rather,

when combining vectors or reading data into R, a stray

character that you missed may change an entire numeric vector into a

character vector. It is a good idea to check the class() of

your results frequently, particularly if you are running into confusing

error messages.

Missing data

One of the great things about R is how it handles

missing data, which can be tricky in other programming languages.

R represents missing data as NA, without